The Compliance Paradox of AI

Innovation has never moved faster, and neither has risk.

Across every industry, employees are adopting AI tools organically, immediately, and almost invisibly. Nobody waits for policy. Nobody files a ticket with IT. If an AI tool helps them move faster, it’s in use before security even knows it exists.

This is Shadow AI, and in 2026, it quietly became the largest unmonitored attack surface inside most enterprises.

The paradox? Lock AI down, and you suffocate productivity. Leave it open, and you invite regulatory, security, and data-sovereignty disasters.

The organizations winning the AI race aren’t the ones blocking tools — they’re the ones observing, governing, and guiding AI usage with guardrails that unlock innovation and reduce risk.

The High-Stakes Scenarios Playing Out Today

Scenario 1: Financial Services — “A chatbot is now an endpoint”

A wealth relationship manager at a global bank decides to “save time.”

He pastes a high-net-worth client’s portfolio data into a public AI chatbot to generate a polished summary.

- The SOC detects an unusual outbound payload.

- They can’t see what was sent — the tool isn’t sanctioned, logged, or monitored.

- Weeks later, a regulatory audit questions how uncontrolled data made its way into a third-party model.

Suddenly, the CIO is explaining how a consumer AI tool became an unmonitored data-exfiltration endpoint.

Scenario 2: Healthcare — “PHI in places you can’t defend”

A clinician uses a free AI transcription app to convert voice notes from patient rounds into structured summaries.

- The app silently stores recordings in an offshore cloud region.

- Its privacy policy is vague; its model training boundaries are unclear.

- Months later, a patient data-rights inquiry forces hospital leadership to explain how sensitive PHI ended up in an AI ecosystem they don’t control.

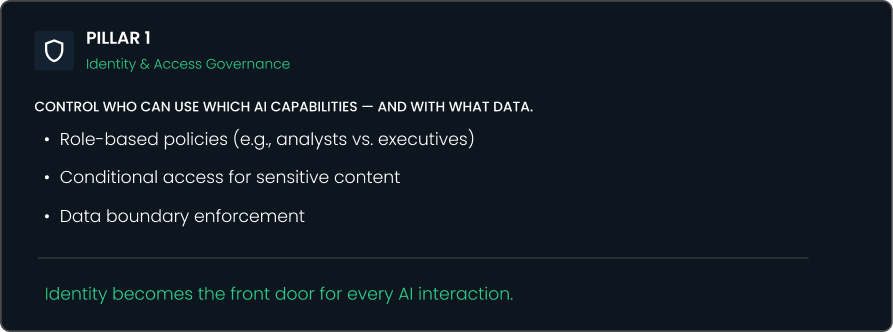

Both scenarios highlight one truth: AI tools behave like endpoints, but most enterprises haven’t secured them like endpoints.

Shadow AI = A New Class of Endpoint

AI tools represent a fundamentally different security challenge:

- They accept unstructured data at scale (text, files, screenshots, chats)

- They can exfiltrate data through a single prompt

- Their internal model boundaries are opaque

- Their data retention and training policies vary wildly

- They often bypass traditional controls such as DLP, VPN, and CASB

In other words, your employees are creating new AI-powered endpoints faster than security can catalog them.

This is why the old model, “block everything until approved,” no longer works, and why the new model, “trust but verify, guide, and govern,” is becoming the standard.

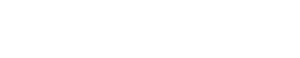

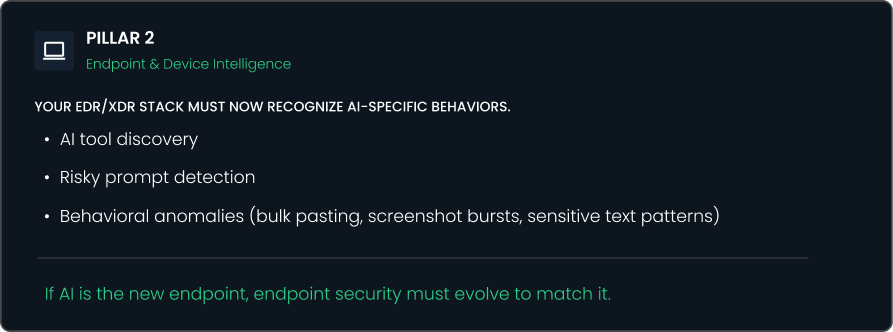

Core Pillars of Securing AI Usage

Emerging Threat Patterns We See Most Often

Here are the common patterns emerging among Celestial’s enterprise customers:

- Data leakage through unsanctioned AI tools: e.g., screenshots, client notes, or source code pasted into public models.

- Shadow data pipelines: employees using AI tools to “clean,” “translate,” or “summarize” sensitive datasets.

- Model integrity risks: AI-generated content injected into systems without validation, leading to compliance drift.

- Prompt-based social engineering: users coaxed into revealing internal details (“just paste your config here”).

- Cross-border data movement: AI tools processing data in jurisdictions your compliance team never approved.

Every organization recognizes these patterns — few have visibility into them.
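
To make the first pattern concrete, here is a minimal sketch of a guardrail that screens a prompt for obviously sensitive strings before it leaves the organization. It is an illustration only, not a description of any particular product: the function name `screen_prompt`, the `ScreenResult` type, and the three regular expressions are all hypothetical, and simple pattern matching like this would complement, not replace, a proper DLP or classification engine.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately simple detectors. A real deployment would rely on
# the organization's own data classifiers rather than a handful of regexes.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


@dataclass
class ScreenResult:
    redacted: str   # prompt text with matches replaced by placeholders
    findings: dict  # detector label -> number of matches


def screen_prompt(text: str) -> ScreenResult:
    """Redact known-sensitive patterns and report what was found."""
    findings = {}
    redacted = text
    for label, pattern in PATTERNS.items():
        # subn returns the new string and the number of substitutions made.
        redacted, count = pattern.subn(f"[REDACTED:{label}]", redacted)
        if count:
            findings[label] = count
    return ScreenResult(redacted, findings)


if __name__ == "__main__":
    result = screen_prompt(
        "Summarize for jane.doe@example.com, card 4111 1111 1111 1111"
    )
    print(result.redacted)
    print(result.findings)
```

A check like this could sit in a browser extension, proxy, or internal AI gateway; the `findings` counts are what give security teams the visibility that is otherwise missing, even when the policy is to redact and allow rather than block.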

Assess Your Secure AI Readiness (3-Minute Self-Assessment)

Even the most mature organizations struggle to answer a simple question:

“How ready are we to adopt AI securely, at scale?”

To make that tangible, we’ve created a short Secure AI Readiness Self-Assessment for technology and risk leaders. In under three minutes, you’ll get:

- A readiness score across six critical dimensions (visibility, policy, data protection, endpoint, monitoring, governance)

- A simple maturity band (At Risk / Emerging / Advancing / Leading)

- Clear next steps to close the gap — including where a Secure AI Workshop can accelerate your roadmap

Guardrails, Not Handcuffs

The future isn’t about banning AI.

It’s about enabling your teams to innovate — safely, quickly, and responsibly.

Shadow AI will continue to grow.

The question is whether you can see it, govern it, and secure it before it becomes your next breach headline.

Your AI strategy isn’t complete until your AI security strategy is.

If you’d like to explore these ideas further, join our upcoming Celestial Systems Open House: a chance to meet our team, see our latest solutions in action, and discuss how AI can be applied in your own organization.

We’d love for you to join the conversation.